Apple SVP Craig Federighi Concedes That Apple Failed to Communicate New Child Safety Features Properly


Apple has faced heavy blowback since announcing several new child safety initiatives last week, both from privacy advocates with somewhat legitimate concerns about misuse of the new technology and from end users who fear that Apple has now reversed course on its privacy initiatives.

As we outlined last week, however, nothing could be further from the truth when it comes to these fears. Apple has built a very sophisticated system that’s actually designed to enhance user privacy by opening the door to even stronger protections for users’ iCloud Photo Libraries and other personal information.

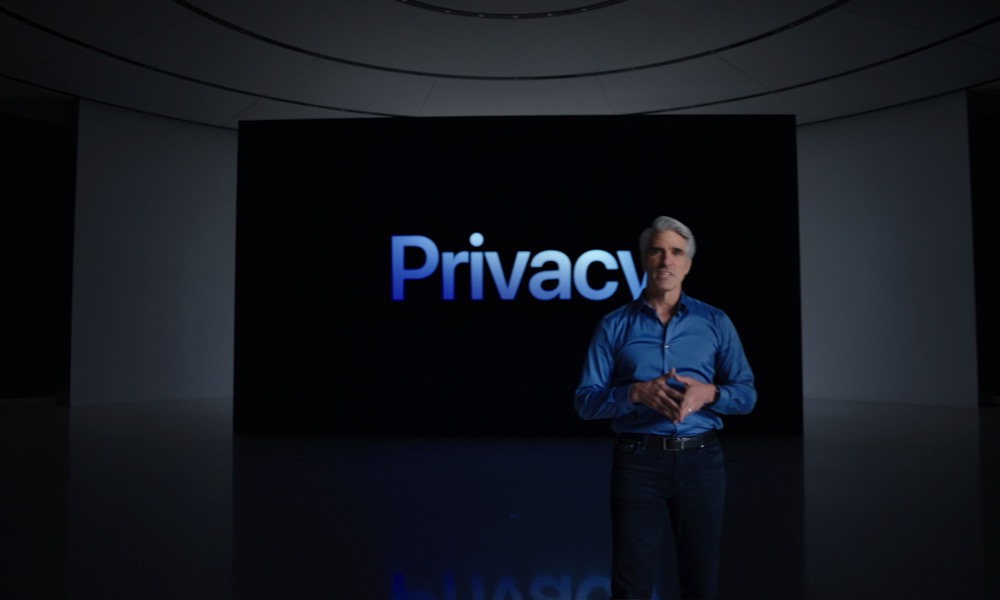

That said, we can truly understand how many users may have been alarmed by Apple’s announcement, and it appears that the company’s Senior VP of Software Engineering, Craig Federighi, agrees that Apple made a pretty big blunder when it came to presenting its new initiatives.

What We Have Here Is a Failure to Communicate

In an exclusive interview with The Wall Street Journal’s Joanna Stern, Federighi candidly admitted that Apple failed to communicate the new initiatives properly, creating a “recipe for confusion.”

We wish that this had come out a little more clearly for everyone because we feel very positive and strongly about what we’re doing, and we can see that it’s been widely misunderstood.

Craig Federighi, Apple Senior VP of Software Engineering

Key to the problem, Federighi says, was the mixed messaging that resulted from Apple combining the announcement of two related, but fundamentally different features: CSAM Detection and Communication Safety in Messages.

I grant you, in hindsight, introducing these two features at the same time was a recipe for this kind of confusion. It’s really clear a lot of messages got jumbled up pretty badly. I do believe the soundbite that got out early was, ‘oh my god, Apple is scanning my phone for images.’ This is not what is happening.

Craig Federighi, Apple Senior VP of Software Engineering

CSAM Detection (CSAM stands for Child Sexual Abuse Material) is designed to scan photos on a user’s iPhone, although it does so in a very limited fashion, and ultimately doesn’t differ all that much from what Apple has already been doing on the back-end for well over a year now.

- Only photos being uploaded to iCloud Photo Library are scanned. No other on-device scanning of photos occurs, and there’s no scanning at all if iCloud Photo Library is not enabled on a user’s device.

- They’re only matched against a database of known CSAM images, provided by the National Center for Missing and Exploited Children (NCMEC).

- Matching images are silently flagged and encrypted in such a way that Apple has no knowledge of them until enough matches occur on a single account.

- Once a certain threshold of matches has been reached for a single account, Apple can examine very low-resolution thumbnails of the flagged images — and only the flagged images — to determine if they are in fact CSAM.

- If, and only if, the images are determined to be CSAM does Apple take any action, which includes disabling the user’s account and reporting them to the NCMEC.

Most importantly, Apple is not performing any kind of deep analysis of your photo library to find images that look like they might be CSAM. It’s looking for matches to specific images that have already been identified by the NCMEC as CSAM. The only way an innocent picture of your toddler in the bathtub is going to trigger Apple’s algorithm is if it’s already been shared around the dark web by child abusers.
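The flow described above can be loosely sketched in Python. This is only an illustration and not Apple's implementation: the SHA-256 digest is a stand-in for whatever image fingerprint the real system uses, the threshold value is invented (the article only says "a certain threshold"), and the encryption that hides matches from Apple until the threshold is reached is not modelled at all. All function names are hypothetical.

```python
import hashlib

# Hypothetical value; the article only says "a certain threshold".
MATCH_THRESHOLD = 3


def fingerprint(image_bytes: bytes) -> str:
    """Stand-in fingerprint: a plain SHA-256 digest of the file contents."""
    return hashlib.sha256(image_bytes).hexdigest()


def count_matches(uploads, known_fingerprints, icloud_photos_enabled):
    """Count uploads whose fingerprint appears in the known-image database."""
    if not icloud_photos_enabled:
        # Nothing is checked when iCloud Photo Library is turned off.
        return 0
    return sum(1 for image in uploads if fingerprint(image) in known_fingerprints)


def needs_human_review(match_count, threshold=MATCH_THRESHOLD):
    """Flagged images become reviewable only once the threshold is reached."""
    return match_count >= threshold
```

The point of the sketch is that the check is a lookup against a fixed set of known fingerprints rather than an analysis of what a photo depicts, so a photo that is not already in the database cannot produce a match.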

By contrast, Communication Safety in Messages does perform analysis of photos, but it does so only to protect children from sending and receiving sexually explicit material in the Messages app.

- Communication Safety in Messages is only available for users under 18 years of age who are part of an Apple Family Sharing group.

- Parents must opt in to the feature to enable it for their kids.

- No scanning occurs at all unless the feature is enabled by the parents.

- Once enabled, the Messages app will use machine learning algorithms to attempt to identify photos containing sexually explicit material, such as nudity.

- When sexually explicit photos are received, they will be automatically blurred out, and the child will receive an age-appropriate warning about viewing the photo.

- If a child under 13 years of age chooses to view the photo, they will receive a second warning that their parents will be notified if they proceed.

- The same logic applies to sending sexually explicit photos: children will be warned before sending them, and a child under the age of 13 who chooses to go ahead will also be warned, before proceeding, that their parents will be notified of their activity.

Other than parental notifications for younger children, no additional information is ever transmitted beyond the child’s iPhone — this is strictly a child safety feature to help families, and is not intended to involve Apple or law enforcement.
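As a rough illustration of the rules listed above, the decision flow for a received photo might look like the following Python sketch. The function name, parameters, and action labels are all hypothetical, and `classify` is a placeholder for the on-device machine learning check, whose details the article does not describe. According to the article, the same logic applies when a child sends a photo.

```python
def handle_photo(age, in_family_group, parent_opted_in, classify, photo,
                 child_views=False, child_confirms=False):
    """Return the actions the Messages app would take, in order."""
    # The feature is inactive unless every condition holds, and the
    # classifier is never invoked in that case (no scanning occurs).
    if not (age < 18 and in_family_group and parent_opted_in):
        return []
    if not classify(photo):
        return []

    # Every child with the feature enabled gets a blurred photo and a warning.
    actions = ["blur_photo", "warn_child"]

    # Only children under 13 can trigger a parental notification, and only
    # after a second warning that tells them this will happen.
    if age < 13 and child_views:
        actions.append("warn_parents_will_be_notified")
        if child_confirms:
            actions.append("notify_parents")
    return actions
```

Note that nothing in this flow reports to Apple or to law enforcement; the only outbound signal is the notification to the parents of a younger child.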

Of course, with both features announced at the same time, and in the very same document, it’s understandable that many otherwise reasonable people were thrown into a panic.

Many were led to believe that Apple was suddenly scanning all the photos that they send and receive in the Messages app — and everything else on their devices.

Apple already published a FAQ earlier this week to highlight these differences and respond to the concerns expressed by many users, and Federighi also clarifies what’s going on in his interview with the WSJ.

When Stern noted that criticisms of the feature “centered on the perception that Apple was analyzing photos on users’ iPhones,” Federighi responded that it’s “a common but really profound misunderstanding,” emphasizing that it only has to do with storing images in iCloud Photo Library.

This is only being applied as part of a process of storing something in the cloud. This isn’t some processing running over the images you store in Messages, or Telegram… or what you’re browsing over the web. This literally is part of the pipeline for storing images in iCloud.

Craig Federighi, Apple Senior VP of Software Engineering

In this context, the move is very obviously intended to prevent CSAM from being stored on Apple’s iCloud servers — something that other cloud storage providers from Google to Facebook have already been doing for over a decade by scanning their cloud servers directly. Apple’s goal here is simply to move that process to the front-end, scanning images on the user’s device before they get uploaded to iCloud.

Remember ‘BatteryGate’

To be fair, it’s not the first time Apple has made a big PR blunder by being a bit overzealous in its own cleverness. However, you’d also think the company would have learned from the massive backlash it received — and massive regulatory fines and legal settlements it had to pay out — as a result of its decision to throttle performance on iPhones with deteriorating batteries.

For those who don’t remember all the details, when Apple released iOS 10.2.1 in January 2017, it quietly added a feature that throttled the maximum performance on older iPhones, based on their battery health.

While Apple had excellent reasons for doing this — for many users it was the tradeoff between having a slightly slower iPhone and having one that died in the middle of an important photo or phone call — it failed to really tell anybody what it was doing.

This didn’t stop third-party developers and researchers from discovering what was going on, however, and as you might have expected, the internet went nuts almost overnight, with accusations of planned obsolescence and assumptions that Apple was making older iPhones slower to force users into more expensive upgrades.

In the midst of the scandal, Apple CEO Tim Cook apologized in an ABC News interview, and conceded that Apple probably wasn’t clear enough in explaining what was going on and what its motivations were.

Maybe we weren’t clear. We deeply apologize for anyone who thinks we have some other kind of motivation.

Apple CEO Tim Cook

Cook deflected some of that responsibility by saying that Apple did explain the changes to some media outlets following the release of iOS 10.2.1, but that people may not have been “paying attention.”

However, as governments and consumer protection agencies around the world began investigating the issue and class action lawsuits piled up, it became clear that they agreed that Apple could have done a much better job of informing its customers about what it was doing.

In the end, Apple was hit with fines in Italy and in France under the consumer protection laws of those countries, merely because it failed to communicate the new behaviour to its customers, many of whom may have opted to buy an entirely new iPhone when a $29 battery replacement would have resolved their problems. Only Brazil ruled in the opposite direction, with courts stating that Apple did nothing wrong, since it ultimately did disclose what it was doing and offered to remedy the situation with discounted battery replacements.

Apple also agreed to pay a $500 million settlement in a U.S. class action lawsuit over the same issue, although as with most out-of-court settlements, it was able to deny any wrongdoing as a condition of the settlement.