The First visionOS Beta is Now Available to Developers


Alongside the second developer betas of its more mainstream operating systems, such as iOS 17, Apple is also seeding the first-ever beta of visionOS, the operating system that will power its Vision Pro mixed-reality headset.

While the hardware to actually install this new beta doesn’t yet exist outside of the walls of Apple Park, Apple has announced a set of developer tools to help those interested in building apps for the new headset get the process started.

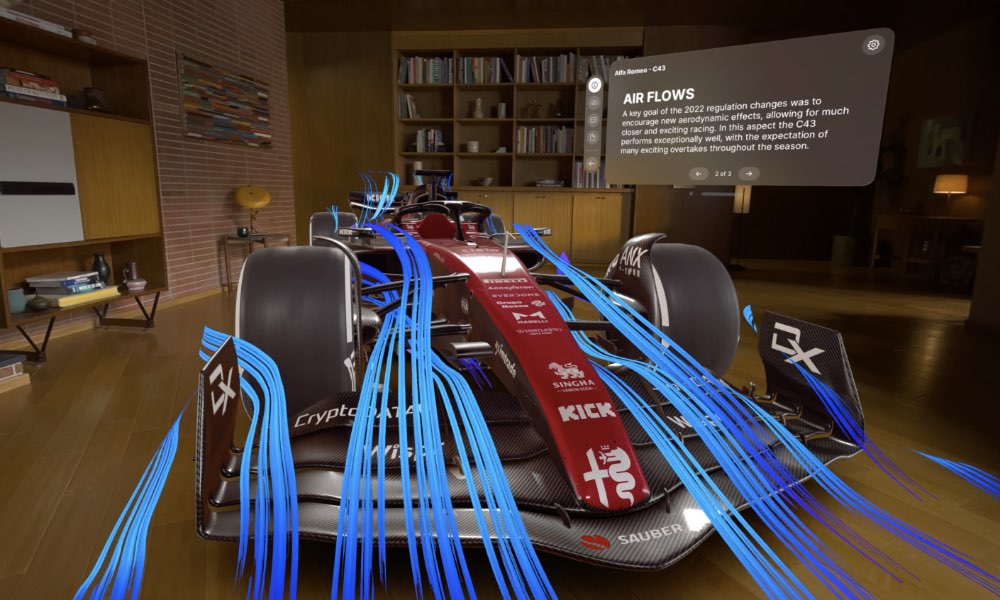

This includes a new “visionOS simulator” that can run visionOS in a window on a Mac to provide a replica of what a user would see when wearing the actual headset. This is not unlike the iPhone and iPad simulators that have long been available in Apple’s Xcode. However, due to the more sophisticated features of the Vision Pro, the visionOS simulator takes things a step further, with the ability to adjust room layouts, lighting conditions, and accessibility features. This will help to ensure the apps that developers are creating will transition more seamlessly to the actual headset once it becomes available.

Members of the Apple Developer Program will be able to use the same tools they’re already familiar with to build apps for visionOS, including Xcode, SwiftUI, RealityKit, ARKit, and eventually, the TestFlight platform to distribute pre-release beta versions of their apps. Apple is also introducing a new Reality Composer Pro tool in Xcode to let developers build 3D models, animations, images, and sounds for the Vision Pro.

Vision Pro Developer Kits

Although the tools available today will help developers get started, the good news is that they won’t have to wait until the Vision Pro headset is available next year before they can test and polish their apps on the actual hardware. Instead, Apple has a couple of ways to give developers access to Vision Pro headsets ahead of time.

Since not every developer can justify a $3,500 headset, Apple plans to open special labs in a few key areas where developers can get hands-on with the Apple Vision Pro hardware. These will open next month in Cupertino, London, Munich, Shanghai, Singapore, and Tokyo. In addition to providing the headsets for developers to work with, Apple will also make its engineers available to work with developers in the lab.

For those who can’t travel to one of those cities or would rather have their own headset on-hand, Apple plans to make developer kits available that will include some version of the Vision Pro hardware for testing. It’s not yet clear how this will work, but it will likely be more complicated than just ordering one through Apple’s Developer portal; Apple notes that development teams will need to “apply for developer kits,” which will presumably require them to explain what they’re working on and why they might need one. Apple hasn’t yet released details on the application process or what these developer kits will cost.

More Insights into Apple’s Vision Pro

While the new tools will kick off an exciting season for developers, the release of the visionOS SDK and first beta also holds some interest for non-developers since it offers more insight into some of the features and capabilities of Apple’s upcoming headset.

For example, developers who have been poking around in the visionOS SDK have already discovered the Vision Pro “Guest Mode,” which will let friends and family members use your headset without giving them access to your personal information.

The folks over at MacRumors have also discovered that Vision Pro will feature a “travel mode” to ensure a smoother experience when using the headset on a plane. This will deactivate some of the awareness features due to the closer proximity of passengers and flight crew, and tweak a few other settings for a more comfortable and user-friendly experience.

Further, although Apple promised that the Vision Pro would be able to run existing iOS and iPadOS apps, it doesn’t plan to open the floodgates and make everything on the App Store available to the Vision Pro.

That’s understandable, as not everything that looks good on an iPad will look good through the Vision Pro. Porting these apps over also won’t be as simple as tweaking a few settings and recompiling, since many iPhone and iPad features won’t work the same way on the Vision Pro, if they work at all.

We’ve already seen that the Vision Pro camera won’t be accessible to third-party apps, but it turns out that’s just the tip of the iceberg. Apple has published a list of several other iPhone and iPad features that are either hampered or unavailable when using a Vision Pro.

Some of these, like the selfie camera, are obvious when you think about it. Others are examples of Apple locking down various sensors for security or privacy reasons, including Core Motion services, barometer and magnetometer data, location services, and HealthKit data.

The Vision Pro also won’t support many of Apple’s ecosystem integration features out of the gate. This includes AirPlay, Handoff, Apple Watch features, Parental Controls, Screen Time, and cellular telephony.

After reading that list, some developers may decide their apps aren’t worth trying to port to visionOS. However, Apple is offering its assistance to developers who want help testing their existing iOS apps for compatibility with the Vision Pro. Apple says developers can “request a compatibility evaluation from App Review to get a report on [their] app or game’s appearance and how it behaves in visionOS.”