Here’s What the New iPhone 12 Camera and LiDAR Scanner Array Could Look Like

EverythingApplePro

One of the biggest surprises when Apple unveiled its new 2020 iPad Pro last month was the addition of a LiDAR Scanner into the mix. Of course, we knew that Apple was working on a LiDAR or Time-of-Flight (ToF) camera system, but we expected it would come to the iPhone first.

Rumours had been swirling for a while that the new iPad Pro would gain a triple-lens camera system, similar to what was introduced on the iPhone 11 Pro last year. However, after Apple announced the new iPad Pro model, it quickly became apparent that earlier leaks had confused the LiDAR Scanner with a third camera lens, since the visual appearance is so similar.

That said, contrary to what some were expecting a LiDAR sensor on the iPhone to do, the implementation on the latest iPad Pro is all about augmented reality and has almost nothing to do with photography. In fact, the iPad Pro doesn’t even support Portrait Mode, at least not on the rear camera.

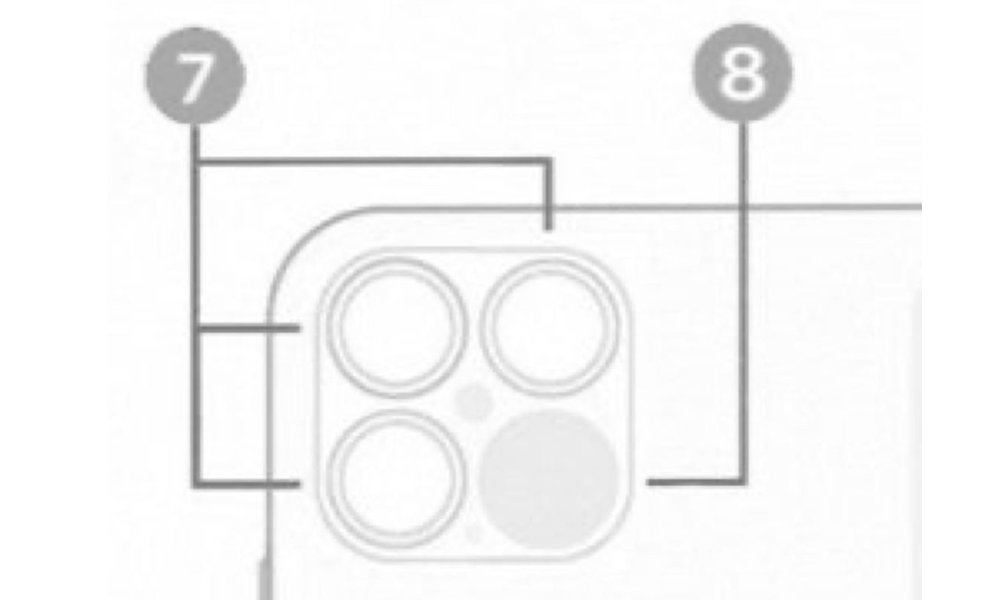

A Quad-Lens Camera Layout

Still, the fact that the iPad Pro’s LiDAR Scanner is focused on AR doesn’t rule out the iPhone 12 joining the party later this year, where the scanner could also be used for a wealth of more advanced computational photography features. Now a leaked image from conceptsiphone, shared by Twitter user @Choco_bit, suggests that the camera bump on the iPhone 12 will expand to a full 2×2 grid to make room for the LiDAR Scanner.

Concepts iPhone notes that it obtained the image from iOS 14. It appears to be an illustration from a user guide or help screen rather than a physical picture of the rear of a prototype iPhone 12, with numbered callouts that likely refer to the three cameras as a set, plus the LiDAR Scanner.

We’ve been hearing reports since 2017 that Apple had a laser-based 3D camera system in the works, so it’s probably not a big surprise that the iPhone 12 will gain this feature now that the iPad Pro has broken ground. It’s also safe to say that it will support the same ARKit 3.5 features that Apple has introduced on the iPad Pro. Whether it will be able to enhance the photographic capabilities of the new iPhones is a bit more difficult to say, but we have no doubt that Apple is working on it. Perhaps the iPad Pro got the LiDAR Scanner first to clear the path for the ARKit features and give Apple more time to design the computational photography aspects.

Apple has also shown indications that it’s getting more serious about augmented reality applications. Some of the new features that have been discovered in iOS 14, such as the new “Gobi” app that will be able to scan real-world objects in stores to display more information, seem like a much better fit for the iPhone (and of course Apple’s upcoming AR Glasses) than for something like the iPad Pro, which is likely to focus more on the medical and interior design apps that Apple has already been showing off.

It’s also not clear whether the LiDAR Scanner will come to the entire iPhone 12 lineup, but we’re betting this will continue to be a “Pro” feature for now — and code already found in iOS 14 would seem to confirm this — with the baseline iPhone 12 models perhaps simply getting a bump to the triple-lens camera system found in the current iPhone 11 Pro.

[The information provided in this article has NOT been confirmed by Apple and may be speculation. Provided details may not be factual. Take all rumors, tech or otherwise, with a grain of salt.]